AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

Back to Blog

Dbt airflow8/1/2023

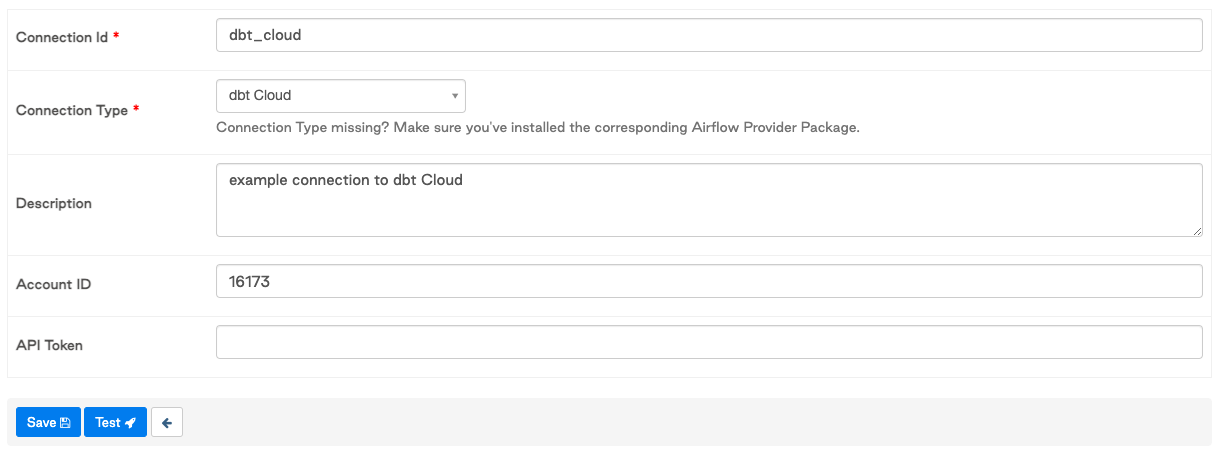

We followed Jostein Leira’s excellent Airflow setup guide to get the Airflow server up and running. We hosted the Airflow server on a virtual machine running in a VPC within Google Cloud Platform. Your dbt transformation process could evolve substantially while keeping this same architecture in place. Our use case is to use dbt to aggregate the average HP score across pokemon catches. We used a publicly available dataset on Pokemon for this exercise, saved in a Google Sheet. Access to a Google Cloud Platform account to host the Airflow server.The dbt Cloud Account ID, which is used in the API calls to dbt Cloud.Snowflake database to accept data loads and run the transforms.

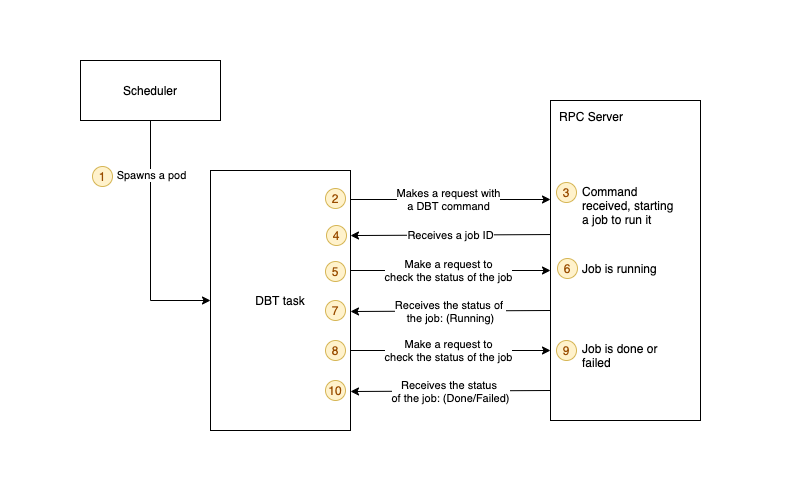

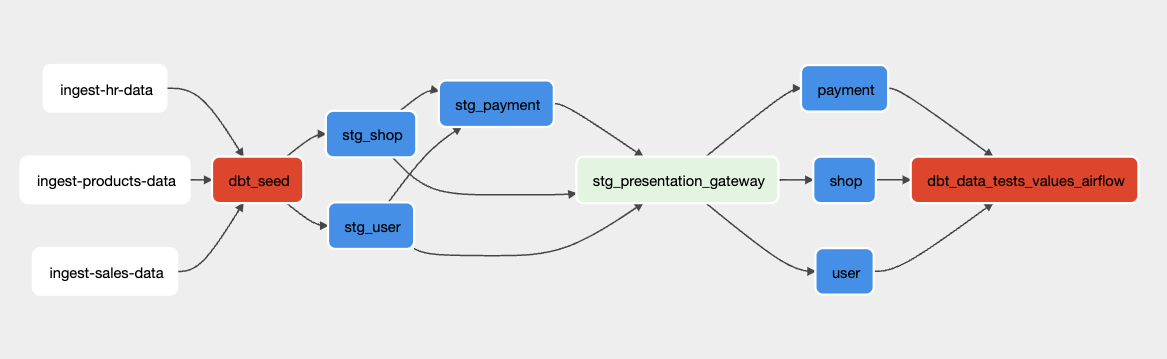

Navigate to Profile > API Access from the dbt Cloud console to generate this. This link documents how to create this value yourself. These are encoded into an api token in base64. To implement this yourself, at a minimum you will need access to the following: To be clear, we didn’t seek to implement a complete production-ready system for this task, but rather to create a starting point. You can find the codebase we implemented in writing this article here. Leveraged dbt’s native ability to parallelize job runs.Minimal re-work on the Airflow side as the dbt transformation and Fivetran processes grow in complexity over time.Isolation between the data loading (Fivetran), transformation (dbt) and orchestration (Airflow) functions in the stack.These include the ability to run Pull Request checks with dbt Test independently of Airflow use. That said, there are some additional benefits to using dbt Cloud. Our friends at Astronomer have a great series of blog posts featuring orchestration of dbt Core with Airflow. Note that an organization using dbt Core could accomplish a very similar workflow using the Airflow Bash Operator to trigger a dbt run. Our goal is to present a simplified, linear workflow and illustrate how to coordinate tasks using the Fivetran and dbt Cloud APIs with Airflow XComs. In that case, event-driven architectures like the one we describe in this article are key. However, many of the larger organizations (2,000+ employees) that and I work with as members of the Solutions Architecture team at Fishtown view these as high-priority items. If these factors are not a high priority, then it’s completely valid to use scheduled processes. These might include supplying fresh data for a machine learning model to consume, or reporting SLA requirements. It’s valuable to reduce overall latency of the load + transform process due to downstream operational dependencies.You want to pass parameters to the DAG run based on the outcome of the data loader(s).This can be particularly painful when a transformation job depends on multiple upstream data loaders. There are a large number of related load and transform jobs, making it difficult to manage their separate schedules.In particular, we’ve observed several concerns raised in the dbt Slack community: Setting up a system to trigger and manage events increases the complexity at first, however there are benefits that make the results worth the time spent. Airflow is a great tool for creating and running this type of workflow. Alternatively, they can be linked into an event-driven workflow.

On the one hand, the load and transform steps can be scheduled and run independently. Fivetran connectors and dbt jobs are one particularly common pairing that comes up, and Analytics Engineers typically face a choice in how to orchestrate the two. In the dbt community, a common question that comes up is how to sync dbt runs with one’s extract and loader tool.

0 Comments

Read More

Leave a Reply. |

RSS Feed

RSS Feed